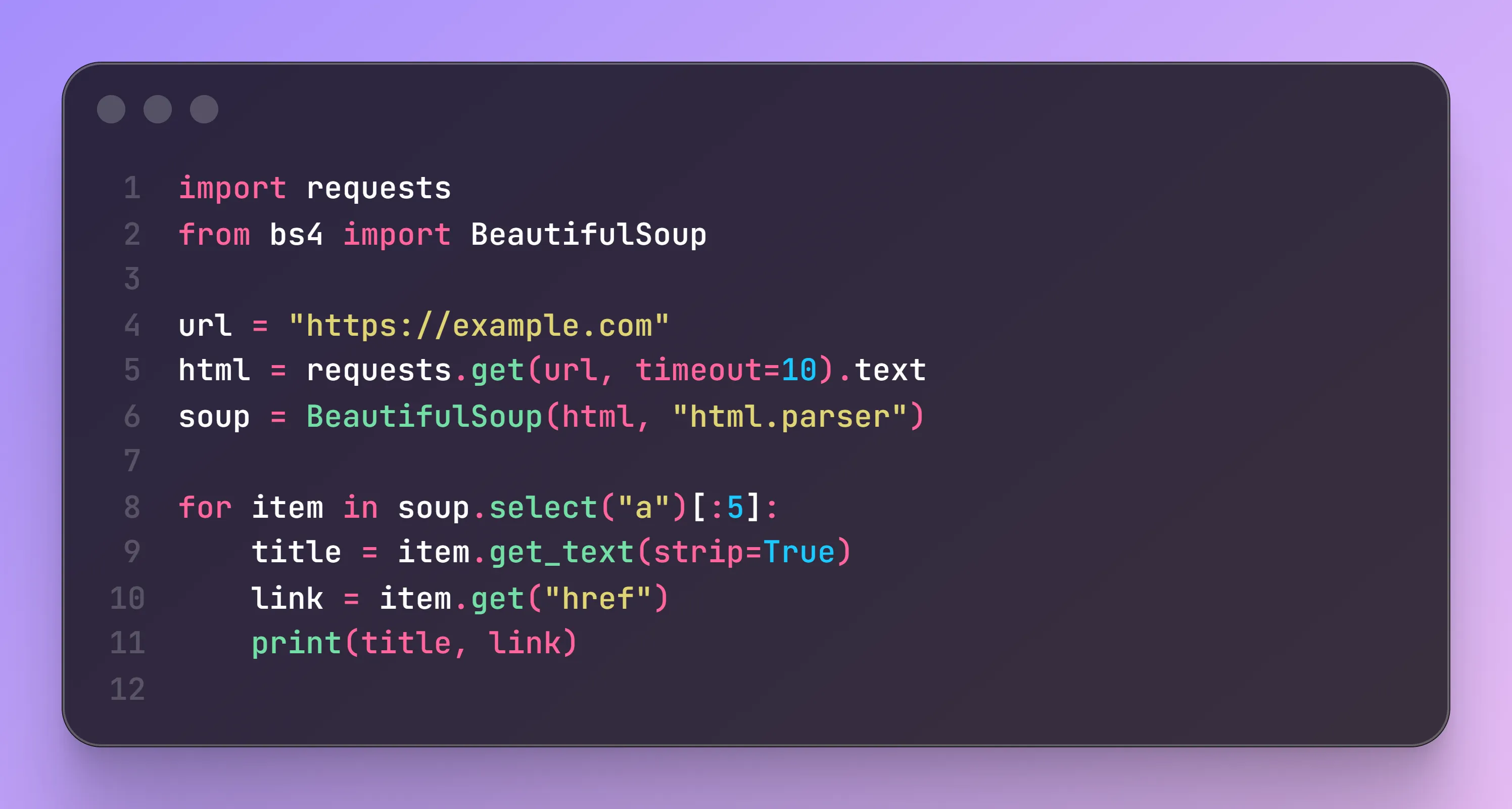

Custom Crawlers & Automation

Production-grade crawler and automation solutions built with Python: stable, efficient, and scalable for complex business workflows.

Tailored crawler architecture for your business scenarios, including dynamic pages and API parsing.

Automate extraction, cleaning, export, and scheduled execution to reduce repetitive manual operations.

Supports account login, cookie/session management, and common anti-bot mechanisms for stable operation.

Delivered as source code, an executable package, or a deployment-ready solution, depending on your operational requirements.

Built for complex extraction and automation

We provide engineering-grade crawler and automation development for projects that involve high complexity, long-term operation, and strict reliability requirements.

Beyond making data collectable, we ensure your system can run continuously and predictably in production.

Capability Overview

- Language: Python

- Scenarios: Websites / App APIs / Mini-programs

- Scope: Data extraction, login flow, anti-bot handling, cleaning, export automation

- Runtime: Local machine / server deployment / scheduled tasks

- Delivery: Source code / executable package / deployment guide

Core Capabilities

Custom crawler development

- Crawler logic designed for specific site structures

- Handles complex pagination, nested structures, and dynamic rendering

- Supports API reverse engineering and endpoint extraction
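
To make the pagination point concrete, here is a minimal sketch of a page-walking loop. It assumes a JSON-style endpoint whose response carries an `items` list and a `has_next` flag; those field names, the `crawl_paginated` function, and the `fetch_page` callable are illustrative assumptions, and real sites will differ (cursor tokens, offset parameters, "next" links).

```python
from typing import Callable, Iterator


def crawl_paginated(
    fetch_page: Callable[[int], dict],
    max_pages: int = 100,
) -> Iterator[dict]:
    """Yield items page by page until the source reports no further page.

    `fetch_page` is a hypothetical callable: given a 1-based page number it
    returns a dict shaped like {"items": [...], "has_next": bool}.
    `max_pages` is a safety cap so a misbehaving endpoint cannot loop forever.
    """
    page = 1
    while page <= max_pages:
        payload = fetch_page(page)
        # Missing "items" is treated as an empty page rather than an error.
        yield from payload.get("items", [])
        if not payload.get("has_next"):
            break
        page += 1
```

Keeping the HTTP call behind `fetch_page` separates site-specific request logic from the traversal itself, so the same loop can be reused across sites and tested without network access.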

Automation workflow design

- Scheduled and batch execution

- Automated Excel / CSV / JSON export

- Automated organization and classification of data
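
As an illustration of automated export, the following sketch writes the same records to CSV and JSON using only the Python standard library. The function name `export_records`, the `stem` parameter, and the record shape are assumptions made for the example; Excel output would need a third-party library and is left out here.

```python
import csv
import json
from pathlib import Path


def export_records(records: list[dict], out_dir: Path, stem: str = "export") -> tuple[Path, Path]:
    """Write `records` to <stem>.csv and <stem>.json inside `out_dir`.

    Records may have different keys; the CSV header is the union of all keys
    in first-seen order, and missing values are written as empty cells.
    """
    out_dir.mkdir(parents=True, exist_ok=True)
    csv_path = out_dir / f"{stem}.csv"
    json_path = out_dir / f"{stem}.json"

    # dict.fromkeys de-duplicates while preserving insertion order.
    fieldnames = list(dict.fromkeys(key for rec in records for key in rec))

    with csv_path.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=fieldnames, restval="")
        writer.writeheader()
        writer.writerows(records)

    json_path.write_text(
        json.dumps(records, ensure_ascii=False, indent=2), encoding="utf-8"
    )
    return csv_path, json_path
```

A call such as `export_records(rows, Path("output"), stem="products")` could then be triggered from a scheduler (cron, a task queue, or similar) to produce fresh files on each run.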

Login and anti-bot handling

- Credential-based login flow

- Cookie/session persistence

- Basic CAPTCHA/slider handling

- Request frequency control and proxy support
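
Two of the items above, cookie/session persistence and request frequency control, can be sketched with the standard library alone. This is a simplified illustration under stated assumptions: `Throttle` and `open_session` are hypothetical names, the cookie file path is up to the caller, and login, CAPTCHA, and proxy handling are site-specific and not shown.

```python
import time
from http.cookiejar import LWPCookieJar
from pathlib import Path
from urllib.request import HTTPCookieProcessor, OpenerDirector, build_opener


class Throttle:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock   # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last = None     # time at which the previous request was allowed

    def wait(self) -> float:
        """Sleep if the previous call was too recent; return seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
            if delay:
                self._sleep(delay)
        self._last = now + delay
        return delay


def open_session(cookie_file: Path) -> tuple[OpenerDirector, LWPCookieJar]:
    """Build a URL opener whose cookies are loaded from `cookie_file` if it exists."""
    jar = LWPCookieJar(str(cookie_file))
    if cookie_file.exists():
        # Keep session cookies too, since login state is often stored in them.
        jar.load(ignore_discard=True, ignore_expires=True)
    return build_opener(HTTPCookieProcessor(jar)), jar
```

In use, a crawler would call `throttle.wait()` before each `opener.open(url)` and `jar.save(ignore_discard=True)` after a successful login, so that later runs can reuse the session instead of logging in again.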

Stability and maintainability

- Exception capture and retry logic

- Structured logs for troubleshooting

- Modular code architecture for future expansion
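
The retry and logging items can be illustrated with a short sketch: a helper that retries a failing task with exponential backoff and emits one JSON log line per failure. The helper name `retry`, the logger name `crawler`, and the log field names are assumptions for this example, not a fixed format.

```python
import json
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("crawler")  # hypothetical logger name


def retry(
    task: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `task`, retrying on any exception with exponential backoff.

    Waits base_delay, 2*base_delay, 4*base_delay, ... between attempts and
    re-raises the last exception once `attempts` is exhausted.
    """
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:
            # One JSON object per line keeps logs easy to grep and parse.
            log.warning(json.dumps(
                {"event": "task_failed", "attempt": attempt, "error": repr(exc)}
            ))
            if attempt == attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Catching every `Exception` is a deliberate simplification; a production crawler would usually retry only on transient errors such as timeouts or HTTP 5xx responses and fail fast on the rest.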

Typical Use Cases

- Long-term enterprise data monitoring

- Continuous e-commerce product/review collection

- Public sentiment monitoring and content tracking

- Complex data acquisition for research projects

- Private/local deployment data systems

We deliver more than temporary scripts: we build production tools designed to run long term.

See it in action

From requirement analysis to final delivery, we build stable and maintainable crawler systems.

Ready to turn data into decisions?

Tell us your data requirements and get a practical, production-ready solution.